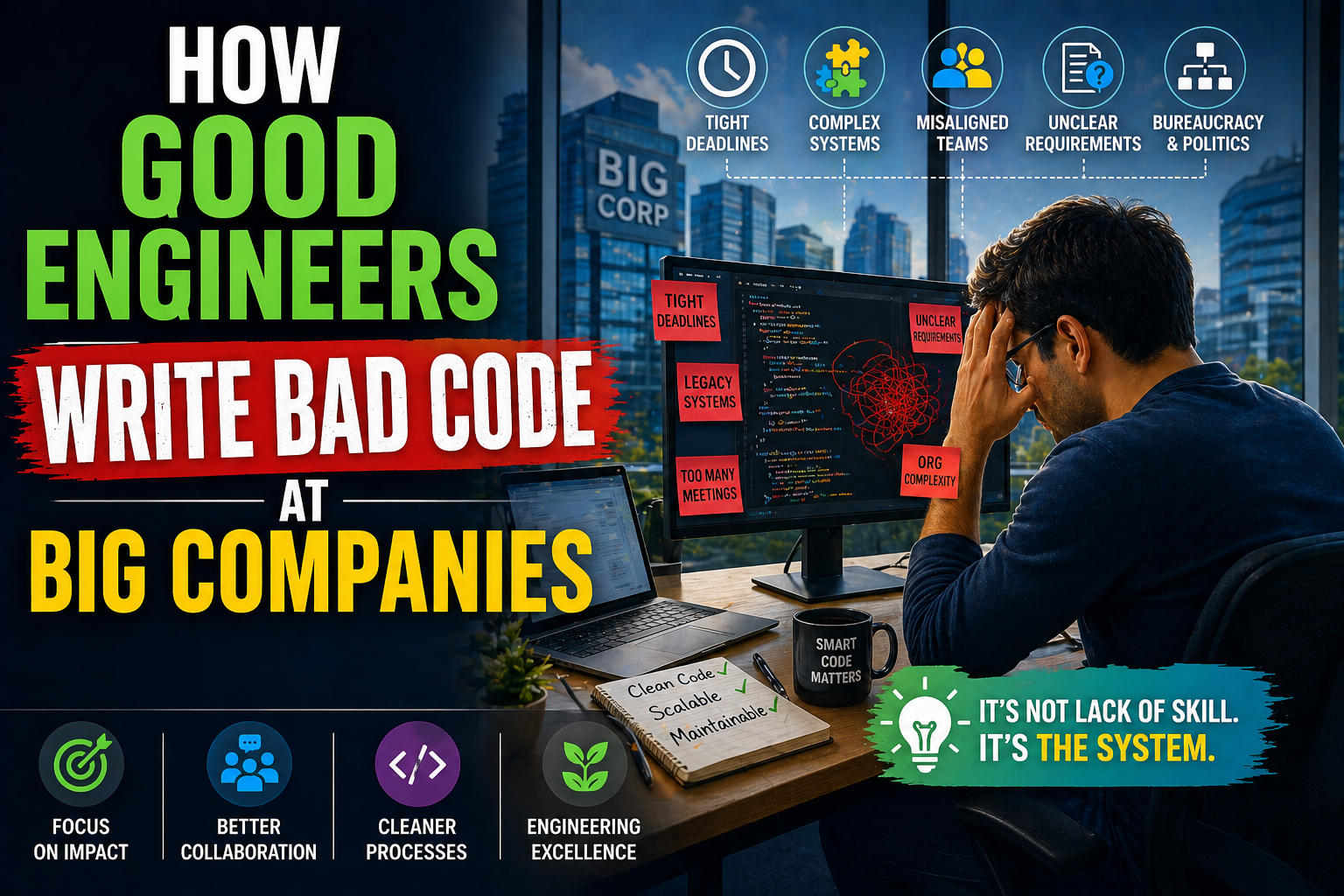

How Good Engineers Write Bad Code at Big Companies

It’s kind of ironic, isn’t it? You bring in a top-notch engineer—someone who knows their design patterns, best practices, and can code circles around most people—yet the codebase still ends up looking like a half-finished puzzle. This isn’t necessarily about incompetence but more about context and constraints inside big organizations.

From what I’ve seen (and what plenty of seasoned engineers on Hacker News and Reddit echo), the cycle usually goes like this: a codebase starts with some compromises or quick hacks due to urgent business needs. Every engineer, no matter how skilled, is forced to follow those messy conventions because rewriting from scratch is often off the table. Deadlines loom, management wants features fast, and engineers opt for hurried fixes rather than careful refactors. Over time, abstractions break, and the code grows into an incomprehensible tangle.

What’s fascinating is how this pressure-cooker environment blurs the line between “good” and “bad” engineers. On Stack Overflow, the conversation often revolves around recognizing the difference between knowledge (which can be book-learned) and skill (which requires painful hands-on experience). Best practices become academic if you don’t have the chance to apply or evolve them in a living codebase.

Take a large enterprise I worked with: newly hired engineers, despite being brilliant and familiar with the latest frameworks, struggled because they had to fit their work into years-old fragile systems. They learned one hard truth—sometimes the best way forward is to add another “if” statement rather than refactor a god-class risking a system meltdown.

At the end of the day, writing “bad” code isn’t always the engineer’s fault. It’s often a symptom of organizational dynamics, legacy decisions, and invisible pressures shaping how code lives and breathes in big companies.

Introduction: The Paradox of Skilled Engineers and Poor Code Quality

It’s one of those odd ironies in tech: some of the smartest engineers end up writing bad code, especially in big companies. And no, it’s not about incompetence or lack of ability. The root cause is almost always more about context — the ecosystem they’re thrust into rather than their personal skills.

Think about it. When a codebase has bad patterns deeply embedded (often from years of feature hacks and quick fixes), even top-tier engineers feel forced to follow these “established” ways just to get anything done. Refactoring or improving the architecture is usually a luxury that comes after product deadlines or business demands — if it even comes at all.

This sets up a vicious cycle: one feature adds a few more conditional branches or “band-aid” fixes because it’s the fastest ship-out-the-door path. A day before launch, frantic bug fixes pile on, celebrating those “hero” engineers who can patch last-minute disasters. Meanwhile, the looming fear of touching “working but fragile” code often drives teams to take the path of least resistance. Refactoring? “Don’t touch what ain’t broken” becomes the unofficial motto.

Hacker News echoes this sentiment by highlighting how turnover, ego clashes, and a lack of tenure worsen the problem — code quality suffers not just from deadlines but from a high churn of engineers who never get a chance to rally around clean, stable code. It’s a cultural and organizational challenge rather than a purely technical one.

Real-life example? At a large social media company I worked with, a critical data processing module was a tangled mess of hacks and quick fixes trapped in a “god class.” Despite feature demands, it was effectively frozen because no engineer wanted to risk breaking everything. It wasn’t about skill — it was about fear and circumstance.

So next time you wonder how so-called “good engineers” churn out fragile code, remember: it’s often the environment forcing their hand, not their talent.

How Good Engineers End Up Writing Bad Code at Big Companies

It’s a bit ironic, isn’t it? You’d expect top-notch engineers at big tech companies to churn out pristine code all the time. But the reality is messier. The phenomenon where even great engineers write bad code often stems from the environment and processes rather than pure incompetence.

Imagine a mammoth legacy codebase, riddled with kludges from years of quick fixes and feature hacks. Every engineer, no matter how skilled, has to play by the ill-fitting rules preset by that tangled mess—adding new features following flawed design patterns or piling on more “temporary” patches simply to hit deadlines. Refactoring? That’s a luxury that’s often shelved indefinitely. Management pressures “ship fast” and “fix it later” become a mantra, locking everyone in a vicious cycle of patch-after-patch, making the system brittle and hard to improve over time.

Take it from a project I once contributed to, where adding a seemingly simple feature spiraled into six layers of conditional logic scattered across unrelated modules. Every workaround led to the next, and before long, no one dared touch that part of the system. The fear of breaking something critical outweighed the need for clean design.

So, it’s not just about bad developers but about survival within flawed systems. Without attention to retention and nurturing engineering culture, even the sharpest minds end up writing “bad” code just to keep the lights on.

Why Good Engineers End Up Writing Bad Code at Big Companies

At first glance, it’s baffling how top-notch engineers—those who’ve mastered clean architecture and elegant design—can produce messy, convoluted code in large organizations. The truth isn’t about lack of talent or laziness; it’s often about the environment they’re stuck in.

One core reason is the “technical debt spiral.” Big companies inherit massive codebases that weren’t built with today’s demands in mind. When new features need to be jammed into subsystems never designed for them, engineers face a tough choice: refactor extensively and risk delays or patch it quickly with hacks. Usually, management wants speed—deadlines loom, and shipping fast takes precedence. That snowballs into more complexity, broken abstractions, and “god classes” packed with unrelated logic.

This isn’t just theory. At a past job, I watched seasoned devs squeeze feature after feature into an old order management system so tightly that even reading the code felt like navigating a labyrinth. Fixing bugs became a race against release dates, and quick patches piled over years created fragile legacy that everyone dreaded touching.

What’s interesting is how perspectives diverge. Hacker News contributors blame not just pressure but also team dynamics—egos, lack of retention, and inconsistent skill levels. Redditors, especially those embracing AI-assisted workflows, emphasize tooling and disciplined processes as partial antidotes to such messes. Stack Overflow comments remind us that real skill comes from struggle and experience, not just knowledge—implying patience and time are essential ingredients.

Understanding this dynamic helps break the blame-game and shines a spotlight on the systemic causes of “bad code” in “good hands.”

How Good Engineers Write Bad Code at Big Companies

It’s a common paradox: skilled engineers, who know elegant design and best practices, still end up contributing to messy, brittle codebases at large companies. The secret sauce isn’t incompetence but context. Imagine being handed a legacy system tangled with hacks, shortcuts, and broken abstractions, and then being asked to add a new feature yesterday. That’s the daily struggle.

The real culprit often isn’t the engineer’s skill but the relentless pressure to ship quickly. Managers want features faster, engineers take shortcuts to meet deadlines, and complexity snowballs. Over time, the code becomes a horror show—“god classes,” scattered responsibilities, and leaky abstractions everywhere. What starts as a quick fix turns into a death spiral where adding another if statement seems less risky than refactoring decades of cruft.

What makes this worse is cultural: innovation and cleanup get deprioritized. I’ve seen good engineers left frustrated, knowing how to fix the mess but told “don’t touch what isn’t broken” because “stability” trumps long-term quality. It’s not a lack of talent but a system problem. Even star performers eventually burn out or leave, fed up with the technical debt treadmill.

For a real-world angle, consider the countless startups spun out from big tech—schemes to recreate startup agility while escaping cumbersome legacy code and bureaucratic processes. Sometimes, the only way to write clean code is to start fresh.

The Impact of Corporate Culture on Code Quality

It’s funny how even the smartest engineers can end up writing bad code at big companies. It’s rarely a matter of skill or knowledge. More often, the corporate culture essentially sets the stage for these “code decay” stories to unfold. When you inherit a legacy codebase riddled with hacks and shortcuts, you quickly learn that the fastest way to ship is to mimic those patterns—because refactoring? Yeah, that’s usually a “maybe someday” task that never quite arrives.

The infamous spiral of death kicks in: new features get stacked on top of brittle foundations. Management pressures push teams to prioritize speed over maintainability. Engineers who want to clean up the mess are often met with “don’t fix what ain’t broken” attitudes, creating an environment where introducing one more “if” statement is safer than rearchitecting.

From the Hacker News crowd’s perspective, the talent churn exacerbates the problem—people stay only a year or two, leading to a lack of ownership and institutional memory. Contrast that with Reddit’s take: individuals trying to hack around these issues leveraging AI tools to catch up, yet facing the same corporate friction. Stack Overflow discussions often point out how this culture stifles skill development, because junior or even mid-level engineers get stuck maintaining code instead of growing through clean, thoughtful design.

One vivid example comes from a friend who joined a major tech firm and was overwhelmed by the layers of patchwork that had accrued over time. Despite their best intentions, they found themselves reluctantly replicating workarounds just to meet release deadlines. The company’s culture didn’t just tolerate this; it rewarded those “firefighters” who patched critical bugs overnight, unintentionally encouraging a toxic cycle.

So, it’s not the code itself that’s broken but the ecosystem around it. Without cultural shifts that value long-term investment and continuous learning, even the best engineers can end up entrenched in writing bad code.

Company Priorities and Their Influence on Engineering Decisions

When you’re part of a big company, the priorities they set often shape engineering decisions more than technical merit alone. Deadlines loom, product launches get pushed forward, and suddenly writing perfect, clean code slides down the list of “must-haves.” It’s not about incompetent engineers churning out bad code; it’s about the pressure cooker environment that makes shortcuts the easiest way to ship features.

Take, for instance, the common “spiral of death” described in many big orgs: a subsystem wasn’t designed for a new feature, but the feature must ship quickly. Engineers can do a proper refactor or slap on a quick hack. Management demands speed → engineers take shortcuts → the codebase gets messier → bugs pile up → frantic last-minute fixes → rinse and repeat. In this scenario, even the best engineers find themselves writing code that they’ll later cringe at.

One real-world example comes from a major fintech company where the payments system was designed years ago for simpler requirements. When a new regulation demanded complex fraud detection, engineers kept patching “if” statements atop layers of existing code just to meet tight deadlines. This patchwork slowed down debugging and increased defect rates, yet refactoring was constantly deprioritized. Over time, even seasoned engineers hesitated to touch the spaghetti — fearing to break something critical.

Interestingly, perspectives vary: Hacker News often discusses how organizational toxicity and short employee tenures compound this problem, whereas Reddit users might lean into tools or workflows—like AI-assisted coding—to push back on bad code. Stack Overflow voices seem to emphasize that raw knowledge alone isn’t enough; experience applying it in high-pressure environments is what separates code that survives from code that stalls maintenance.

At the end of the day, company priorities—driven by deadlines, delivery pressure, and retention blind spots—set the tone for engineering output. Understanding that dynamic helps demystify why great engineers sometimes produce less-than-great code and points toward the need for better organizational support, patience, and room to refactor.

Pressure to Meet Deadlines and Release Cycles

Anyone who’s worked in a big tech company knows the relentless grind of deadlines can warp even the best engineers’ code quality. It’s not about lacking skill or care—it’s about a culture that rewards speed over elegance. When management demands a new feature “yesterday,” engineers are caught between implementing a proper design and cranking something out that just barely works. Predictably, taking shortcuts means piling on more conditionals, hacks, and band-aids that eventually choke the codebase.

This pressure creates a vicious cycle: Every new feature gets shoehorned into subsystems they weren’t made for. Refinements and refactors get pushed aside because “if it ain’t broken, don’t fix it.” Bugs pile up, critical patches come hurtling in right before deadlines, and engineers who clean up last-minute messes get hailed as heroes—while the underlying architecture slowly crumbles.

From what you hear on Hacker News, Reddit, and Stack Overflow, the problem isn’t just deadlines themselves—it’s how companies don’t invest enough in retention or cultures that empower long-term ownership. For example, at a sprawling startup I once worked for, legacy spaghetti was so bad that new hires were terrified of making changes. They’d add another “if” instead of rebuilding a component from scratch, fearing the unknown ripple effects.

Ultimately, the pressure cooker environment can push engineers into producing bad code—not because they want to, but because they have to. It’s a systemic issue, where engineering excellence is often undermined by short-term business priorities.

How Good Engineers End Up Writing Bad Code in Big Companies

It’s a curious paradox: some of the brightest engineers, working in well-resourced companies, still produce what looks like bad code. The culprit? It’s rarely a lack of skill or knowledge. Instead, it’s the relentless pressure cooker of deadlines and the corporate culture that prizes quick shipping over thoughtful design. I’ve seen this cycle play out across startups and tech giants alike.

Here’s the pattern: engineers inherit a codebase that wasn’t built for the new feature they have to add. They face a choice—invest days in cleaning and refactoring, or cobble together quick hacks to meet a looming deadline. Almost always, management wants that feature live yesterday, so the band-aid fix it is. The result? Layers of brittle, convoluted code, “god classes” stuffed with unrelated responsibilities, and abstractions that leak everywhere.

Then comes the bug rush right before release, where engineers frantically patch hot spots—often celebrated as heroes—while the underlying mess grows. Attempts to refactor are met with “don’t touch what isn’t broken,” cementing fear of change.

Take, for example, a senior engineer at a legacy logistics platform I once knew. Despite their expertise, they joked about “being a doctor for a sick system” because every change required surgery through tangled logic and undocumented assumptions. It didn’t matter how good they were; the environment forced compromises on quality.

This spiral isn’t just about coding; it’s a symptom of corporate culture focused on short-term wins and undervaluing maintenance and knowledge retention. Without addressing these cultural pressures, even brilliant engineers are set up to unknowingly write bad code.

Complexity and Scale: Navigating Large Codebases

Working in a big company, especially at a tech giant, often means dealing with sprawling codebases that have grown uncontrollably over years. It’s not about bad engineers writing bad code; often, it’s really good engineers forced to play within the messy confines of legacy systems and mounting pressure. The cycle is brutal: add a quick fix here, patch a bug there, and next thing you know, your well-designed abstractions are leaking like a sieve.

Take the example of a fintech company I once worked with. Their payment processing system was repeatedly patched to accommodate new features—regulatory changes, vendor integrations, new payment methods—none of which the original system was architected to handle. Engineers knew a refactor was needed, but deadlines and risk aversion meant hacks piled on hacks, making even small changes a minefield. It’s a classic case of “don’t touch what ain’t broken,” which only results in the codebase becoming a fragile jigsaw puzzle.

From Hacker News discussions, many underscore the human element—turnover means no one remembers why certain decisions were made, and management’s insistent “ship fast” mentality often trumps quality. Stack Overflow users emphasize experience and skill to mitigate this, but agree that even the best devs struggle when forced to twist legacy logic. Reddit voices AI-assisted workflows as a modern hack, but they still advocate deliberate planning and structured coding practices to keep complexity manageable.

In the end, navigating large, complex codebases isn’t about avoiding bad code entirely—it’s about managing complexity while incrementally improving and resisting the seductive quick fixes that promise speed but compound future pain.

In conclusion, the phenomenon of skilled engineers producing suboptimal code within large companies is a multifaceted issue rooted in organizational dynamics rather than individual competence. Factors such as tight deadlines, shifting project priorities, complex legacy systems, and inadequate communication channels create an environment where even the best engineers may compromise on code quality. Understanding these pressures is crucial for companies aiming to improve their software development practices. By fostering a culture that values long-term maintainability, encouraging collaboration, and providing engineers with the necessary time and resources, organizations can mitigate the creation of bad code. Ultimately, recognizing that good engineers can write bad code under systemic constraints allows companies to address the root causes rather than assign blame, leading to more sustainable technological growth and healthier engineering teams. Emphasizing structural improvements over individual fault is the key to transforming expert capability into consistently high-quality software.