Anthropic’s recent tweaks to Claude Code—dialing down reasoning defaults, clearing session memory aggressively, and tightening verbosity—sparked quite the backlash. The common thread? They prioritized cost-saving and server load over output quality, without adequately informing users. Now, while some argue this was just pragmatic business sense for a company eyeing profitability, the core issue remains: users were blindsided. This saga underscores the limitations of relying solely on hosted APIs controlled by corporations that juggle user experience with margins.

Open-source advocates have seized this moment to champion open-weight AI models that users can self-host. The appeal is obvious: full control over configurations, no surprise degradations, and no hidden ‘efficiency’ tweaks that hobble performance. Sure, self-hosting brings challenges like infrastructure costs and maintenance complexity, but the tradeoff can be worth it, especially for businesses or power users who depend on consistent AI quality.

Take developers who run an open-weight model such as Qwen 3.6 27B locally through llama.cpp. They get predictable inference costs and full visibility into their configuration, and they avoid sudden changes in service quality or pricing. With continued improvements in quantization and runtime optimization, the performance gap with cloud APIs is narrowing. Anthropic’s experience is a cautionary tale, but also a strong nudge toward local, open models when reliability and control truly matter.

Introduction: Understanding Anthropic’s Recent Model Performance Shift

Anthropic’s recent updates to Claude’s reasoning settings reveal a tricky balancing act between performance quality and system efficiency that many users probably feel but few get directly told about. On the surface, the changes might sound like the company “dumbed down” their models to cut costs, but the reality is more nuanced. They adjusted defaults from “high” to “medium” reasoning effort to tackle latency issues—the UI freezing up on users is a nightmare scenario. But in doing so, they made the model less sharp, which their users quickly noticed and pushed back on, forcing a reversal.

Beyond just tweaking default settings, a bug caused Claude to appear forgetful during sessions, and a subsequent prompt to limit verbosity lowered code quality. These are not malicious moves but rather growing pains of pushing powerful AI models into real-world, high-demand environments with limited server resources—and without direct user control over performance tradeoffs.

From a practical standpoint, this underscores why many developers and businesses favor open-weight, locally hosted models. Hosting your own model, for example a Qwen model served through llama.cpp, means you decide the compromises, not a service provider trying to preserve profit margins or reduce server load. When your AI tool is mission-critical, relying on opaque defaults and backend optimizations can mean facing subtle but significant drops in quality at inconvenient times.

A developer I know switched from a hosted API back to a local 13B model after fluctuations in query speed and reliability started putting project deadlines at risk. This real-world headache is a reminder: control often beats convenience in professional AI use.

Anthropic’s AI Model Tweaks: Quality Tradeoffs and What They Mean

Anthropic’s recent adjustments to their Claude Code AI models offer a revealing glimpse into the balancing act between performance and operational cost that AI companies constantly juggle. Over a few months starting in March, Anthropic dialed back the default reasoning “effort” to medium from high to reduce latency issues—after all, nobody wants their chat interface to freeze mid-session. But this led to weaker outputs: more forgetfulness, repetition, and lower code generation quality. The company quickly reversed course based on user feedback, yet these shifts happened without clear, upfront communication and outside user control, which understandably frustrated paying customers.

This is a pattern not unique to Anthropic but emblematic of for-profit AI providers: optimizing infrastructure expense to maintain profitability often comes at the expense of raw model quality. The idea of “models being deliberately made dumber” sounds dramatic, but it’s really about preset defaults and token spending limits rather than fundamental capability degradation.

What does this mean if you depend on AI as part of your workflow? The user-centric takeaway is simple yet crucial: owning or hosting your own open-weight models brings transparency and control. It’s the difference between tailoring your AI’s “smarts” to fit your needs versus being stuck with whatever cloud provider’s cost-cutting choices dictate.

Take developers who use llama.cpp to run large models locally: they get consistent performance from their own hardware, with no unexpected throttling. In a world where AI is becoming mission-critical, that control is gold.

Why Model Performance Is a Dealbreaker for Trust and Adoption in AI

When Anthropic quietly dialed down Claude Code’s “reasoning effort” to cut latency and server load, many users felt the difference: responses came back faster, but the model was less sharp and less reliable. It’s a stark reminder that performance isn’t just a luxury; it’s foundational to trusting AI tools in real work or services. Users don’t just want *some* answer; they expect quality every single time. And when that quality dips without notice because a company tweaks backend settings for cost reasons, it creates an invisible rift between provider and customer.

This tension is especially palpable since these changes are often made outside user control and completely unannounced, eroding the very trust that prompts paying for these services. Anecdotally, I’ve seen freelancers and developers forced to scramble mid-project when an AI tool suddenly “forgets” previous context or generates lower-quality code. It kills productivity and raises the question: should you depend on a black-box model whose performance you can’t fully gauge or control?
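One way to gauge a model you do not control is to run a small, fixed set of "canary" prompts on a schedule and compare the pass rate against a baseline you recorded earlier. The sketch below is only an illustration under stated assumptions: the prompts, the substring check, the tolerance, and the `stub_model` stand-in are all hypothetical, and a real check would call your actual hosted or local model.

```python
from typing import Callable

# Hypothetical canary set: (prompt, substring the reply must contain).
CANARIES = [
    ("What is 17 * 3? Reply with the number only.", "51"),
    ("Name the Python keyword that defines a function.", "def"),
    ("What is the capital of France?", "Paris"),
]


def pass_rate(model: Callable[[str], str]) -> float:
    """Fraction of canary prompts whose reply contains the expected text."""
    hits = sum(expected in model(prompt) for prompt, expected in CANARIES)
    return hits / len(CANARIES)


def has_regressed(current: float, baseline: float, tolerance: float = 0.1) -> bool:
    """True when the pass rate fell more than `tolerance` below the baseline."""
    return current < baseline - tolerance


def stub_model(prompt: str) -> str:
    """Stand-in for a real API or local model call; answers two of three."""
    answers = {"17 * 3": "51", "capital of France": "Paris"}
    return next((a for key, a in answers.items() if key in prompt), "I am not sure.")


if __name__ == "__main__":
    rate = pass_rate(stub_model)
    print(f"pass rate: {rate:.2f}, regressed: {has_regressed(rate, baseline=1.0)}")
```

A substring match is a crude quality signal; it is used here only to keep the sketch self-contained. The point is the shape of the check: fixed inputs, a recorded baseline, and an alert when the score drops.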

That’s where open-weight, locally hosted models shine—offering transparency and stability. Running an instance of an open model like Qwen 3.6 locally means if performance drops, it’s on you to diagnose and tweak, not a surprise corporate decision sneaking in behind the scenes. While these setups demand more upfront work, the payoff is ownership of your AI’s reliability—a priceless asset when trust is on the line.

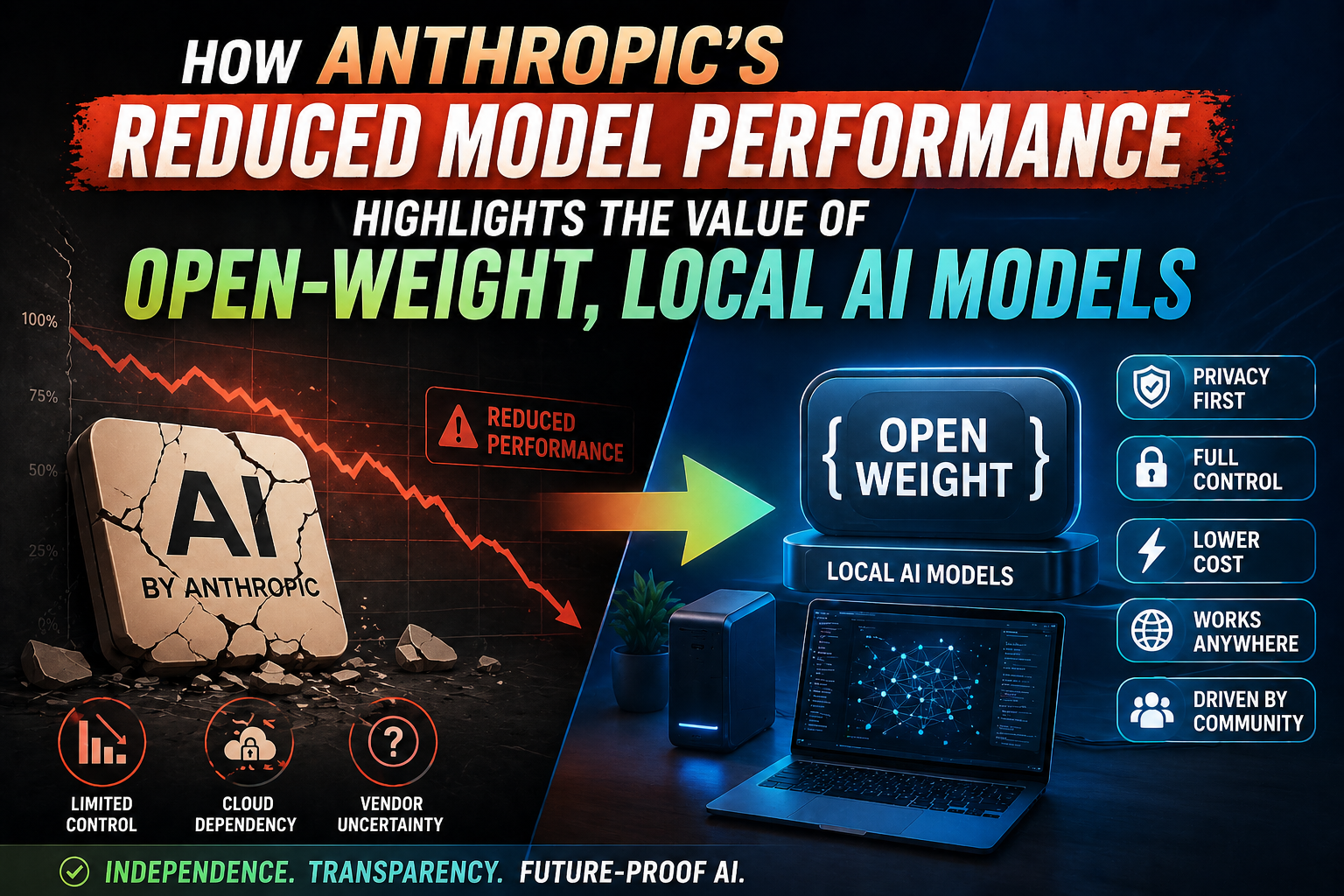

Why Anthropic’s Reduced Model Performance Makes Open-Weight, Local AI Models More Valuable

Anthropic’s recent decisions to dial back default model reasoning effort and introduce prompt changes—done mostly to cut server costs without telling users—shed light on a growing problem with hosted AI models. When you rely on a proprietary API, you’re essentially at the mercy of the provider’s business priorities. If reducing computational burn means compromising quality, there’s little you can do except accept it or complain.

That’s why the push for open-weight models, which you can run locally or through trusted hosts, is gaining serious traction. When you control the weights, you control the tradeoffs. You decide if you want to burn more compute for better answers or scale down for cost savings. No hidden server bugs or forced defaults. It’s the difference between being a customer and a partner in your AI usage.
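To make "you control the tradeoffs" concrete, here is a minimal sketch of an effort setting that lives in your own code rather than on a provider's servers. The profile names and token budgets are assumptions chosen for illustration; they are not Anthropic's settings or a llama.cpp feature. The payload uses the OpenAI-style chat fields (`messages`, `max_tokens`, `temperature`) that llama.cpp's bundled server accepts on its chat completions endpoint.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class EffortProfile:
    """Hypothetical, user-defined tradeoff between answer depth and cost."""
    name: str
    max_tokens: int      # upper bound on generated tokens per reply
    temperature: float   # sampling temperature


# Illustrative values only; tune them for your own model and hardware.
PROFILES = {
    "high": EffortProfile("high", max_tokens=4096, temperature=0.2),
    "medium": EffortProfile("medium", max_tokens=1024, temperature=0.2),
}


def build_chat_request(prompt: str, effort: str = "high") -> dict:
    """Build an OpenAI-style chat completion payload for a local server."""
    if effort not in PROFILES:
        raise ValueError(f"unknown effort profile: {effort!r}")
    profile = PROFILES[effort]
    return {
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": profile.max_tokens,
        "temperature": profile.temperature,
    }


if __name__ == "__main__":
    print(json.dumps(build_chat_request("Refactor this function.", "medium"), indent=2))
```

Because the default is an argument in your own function, changing it from "high" to "medium" is a visible change in your own version history rather than something that happens upstream.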

Reddit discussions echo this sentiment strongly. While some users call Anthropic’s changes “not evil, just business,” there’s a shared recognition that profit motives inevitably shape hosted experiences. The open-weight movement—boosted by projects like llama.cpp—lets organizations and developers sidestep these headaches. For example, a mid-sized startup I know switched from a hosted LLM to running Qwen 3.6 locally. Their coding assistant now feels snappier and more consistent, and they’re no longer hit by mid-session “forgetfulness” bugs or secret parameter shifts.

In short, Anthropic’s hiccups highlight the clear advantage of having agency over your AI models—whether by hosting open weights yourself or through reliable third parties. It’s not just about transparency; it’s about preserving quality and control.

What Is Anthropic and Its Role in AI Development?

Anthropic is one of the key players in the AI landscape, known primarily for its development of the Claude series of large language models. Founded by former OpenAI researchers, the company aims to build AI systems with a strong emphasis on safety and alignment—a mission that resonates deeply in an industry racing ahead with barely a glance back at potential risks.

However, recent decisions from Anthropic highlight some of the real trade-offs involved in running AI at scale. For example, as surfaced by user feedback and internal admissions, Anthropic temporarily dialed down Claude Code’s “reasoning effort” to reduce latency and server load. This wasn’t about making the models inherently “dumber,” but a practical move to optimize costs and infrastructure — crucial factors when their $200/month subscription can consume upwards of $5,000 annually in compute under the hood. That’s a huge backend investment that’s being heavily subsidized to win market share.
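Taking the two figures quoted above at face value, a quick calculation shows the size of the implied subsidy. This is only arithmetic on the article's own numbers, not independent data on Anthropic's costs.

```python
MONTHLY_PRICE_USD = 200      # subscription price quoted above
ANNUAL_COMPUTE_USD = 5_000   # compute figure quoted above, taken at face value

annual_revenue = MONTHLY_PRICE_USD * 12
annual_shortfall = ANNUAL_COMPUTE_USD - annual_revenue
cost_to_revenue = ANNUAL_COMPUTE_USD / annual_revenue

print(f"annual revenue:   ${annual_revenue:,}")                      # $2,400
print(f"annual shortfall: ${annual_shortfall:,}")                    # $2,600
print(f"compute cost per $1 of revenue: ${cost_to_revenue:.2f}")     # $2.08
```

On these numbers, a heavy user costs roughly twice what they pay, which is the kind of gap that creates pressure to trim compute per request.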

What this underscores is the tension between delivering cutting-edge AI capabilities and managing the enormous computational and financial demands. While Anthropic’s approach suits customers who prioritize reliability and scalability, it also reveals why many in the community are championing open-weight models you can run locally or choose from less restrictive providers. That friction was echoed in the Reddit discussions too, where open-source runtimes like llama.cpp and open-weight models like Qwen 3.6 earn appreciation for giving users more control without hidden throttling.

At the end of the day, Anthropic embodies the challenges inherent in commercializing AI—balancing user experience, safety, and profitability in a rapidly evolving ecosystem. Their journey is a microcosm of the broader AI industry’s crossroads.

Background on Anthropic as an AI Research Company

Anthropic has made a name for itself as one of the key players in the AI research space, focusing on building large language models with an emphasis on safety and reliability. Unlike some competitors who emphasize sheer scale or flashy demos, Anthropic has carved out a niche around principled research, interpretability, and practical deployment—though recent events have shown the tricky balance between ambition and operational realities.

The company’s Claude model lineup, including versions like Sonnet and Opus, aims to deliver high-quality reasoning and coding assistance. But as Anthropic recently demonstrated, tweaking model behavior to reduce server load—by dialing down “reasoning effort” or adjusting verbosity—can unintentionally degrade user experience. The critical complaint from users was that these server-side adjustments happened without transparency or user control. While this makes perfect sense from a business perspective—keeping costs manageable and scaling sustainably—it also spotlights how dependent customers have become on the provider’s discretion.

This tension highlights why many in the AI community advocate for open-weight, locally hostable models. Having control over the model means you’re not at the mercy of sudden policy or quality shifts. For example, companies like Meta pushing open weights for LLaMA models have enabled developers to run powerful AI on their hardware, experiment, and tailor performance without a cloud middleman. In contrast, Anthropic’s closed ecosystem reveals how user experience can degrade silently behind the scenes when cost-cutting measures quietly bite.

At the end of the day, Anthropic embodies the crossroads of cutting-edge innovation and commercialization challenges—a reminder that great AI doesn’t only depend on model design, but also on how openly and flexibly it’s delivered to users.

Key Contributions and Innovations by Anthropic in the AI Space

Anthropic has repeatedly pushed the envelope in large language models, particularly with their Claude series, blending safety research with usable AI. Their approach to “constitutional AI,” which guides model behavior with a written set of principles and AI-generated feedback rather than relying solely on human preference labels, stands out as a nuanced innovation aimed at keeping AI aligned with human intent. This isn’t just theory; their openness in sharing research on interpretability and model mechanisms adds real value to the community.

That said, the recent missteps with default reasoning modes in Claude Code — toggling effort levels to save compute but inadvertently compromising user experience — highlight the tricky balance Anthropic and others face between operational efficiency and performance. It’s a practical reminder that behind sleek AI demos are costly infrastructure decisions, often invisible to end users.

Consider a hypothetical: a startup integrates Claude Code into its customer support system. When Anthropic changes the default from “high” to “medium” reasoning to reduce server load, the startup’s bot becomes less reliable without the startup having agreed to anything. Being blindsided like that is a downside of relying on closed, hosted models.

These incidents underscore the value of open-weight, locally-hosted models where businesses can control tradeoffs transparently. Anthropic drives cutting-edge research and safety standards, but their experience also serves as a cautionary tale about the costs of centralized AI services outside user control. In the end, their innovations push AI forward, but they also remind us why local autonomy matters just as much.

Overview of Anthropic’s Model Architecture and Deployment Approach

Anthropic’s approach to building and deploying Claude Code shows a classic tension between optimizing for user experience and managing backend costs. Their model architecture, grounded in cutting-edge reasoning capabilities, supports adjustable “effort” modes—initially set to “high” by default, later dialed down to “medium” to reduce latency. The tradeoff was clear: less compute effort meant faster responses, but at the expense of reasoning quality. Unfortunately, this adjustment backfired, prompting a revert to the original default after users expressed dissatisfaction with the apparent drop in intelligence.

What’s telling is how these changes were made behind the scenes, with paying customers left in the dark. This isn’t unusual in proprietary AI deployments—companies balance server load, compute costs, and UX but often prioritize profitability. Such opaque shifts erode trust, especially when your work depends on reliable AI performance.

This situation highlights why many in the AI community advocate for open-weight models you can host locally or via trusted providers. If you control the weights and infrastructure, you decide on that latency-quality dial, free from surprise downgrades or hidden throttling.
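When you host the model yourself, you can also record exactly what is running. The following sketch, offered as one possible approach rather than an established practice from the source, fingerprints a local setup by hashing the weights file together with the sampling settings. The function names, the settings keys, and the idea of storing the digest alongside your project are all assumptions for illustration.

```python
import hashlib
import json
from pathlib import Path


def file_sha256(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a (possibly large) weights file in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def setup_fingerprint(weights: Path, settings: dict) -> str:
    """Combine the weights hash and the sampling settings into one digest."""
    record = {"weights_sha256": file_sha256(weights), "settings": settings}
    # sort_keys makes the digest independent of dictionary ordering.
    canonical = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()
```

If the fingerprint stored with a project matches the one computed at startup, neither the weights nor the settings have changed since it was recorded; a mismatch tells you to look for what changed before blaming the model.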

A practical example comes from my team’s experience using a LLaMA-based local model. Despite needing more setup upfront, we avoided unpredictable quality drops that would have cost us time and money if reliant on hosted APIs with opaque optimizations. It’s a classic “pay once, own forever” model versus renting time on someone else’s machine with shifting rules. From Anthropic’s experience, the value of owning your AI stack is more evident than ever.

Dissecting Anthropic’s Dips: What Their Model Performance Teaches Us About AI Access

Anthropic’s recent performance quirks with Claude Code serve as a telling example of the trade-offs SaaS AI providers often make behind the scenes. They dialed down “reasoning effort” and tweaked session memory—all moves aimed at managing server load and costs, not user experience. The result? Models that felt less sharp, more forgetful, and occasionally repetitive. They even introduced prompt changes that reduced verbosity but ended up harming code quality, only to revert those tweaks later. From a customer standpoint, this feels like a bait-and-switch: you pay for “high intelligence,” but the actual delivered quality varies at the whim of operational decisions.

What struck me is the lack of transparency and user control. If a flagship AI subtly steps down its intensity to save a buck without alerting customers, it shakes confidence. This is where the value of open-weight, locally hosted models really shines. Having full control over your AI stack means you won’t be surprised by sudden performance dips driven by someone else’s profit calculus.

Take the rise of locally run models like Qwen 3.6, served through runtimes such as llama.cpp. One developer I spoke with switched to self-hosted models for his NLP pipeline after repeated quality surprises from hosted APIs. He found that paying a bit more for compute but owning the weights was worth it, because he regained control over performance and reliability.

Anthropic’s experience underlines an industry lesson: for mission-critical workflows, relying solely on opaque cloud AI providers is risky. Open-weight, locally hosted models aren’t just a nice-to-have—they’re fast becoming essential insurance against unpredictable service changes.

Anthropic’s experience with reduced model performance shows why open-weight, locally deployable models matter. When you cannot access a model’s parameters, you cannot fine-tune, optimize, or adapt it to your use case, and you have no say when the provider changes its behavior. Open weights give researchers and developers transparency and room to customize as their needs evolve. Local deployment can also keep data on your own hardware, remove network round trips, and put operational decisions in your hands. Anthropic’s difficulties are a reminder that relying only on closed, hosted models carries real risk, and that keeping an open-weight, locally runnable option available is a practical way to protect quality and control.