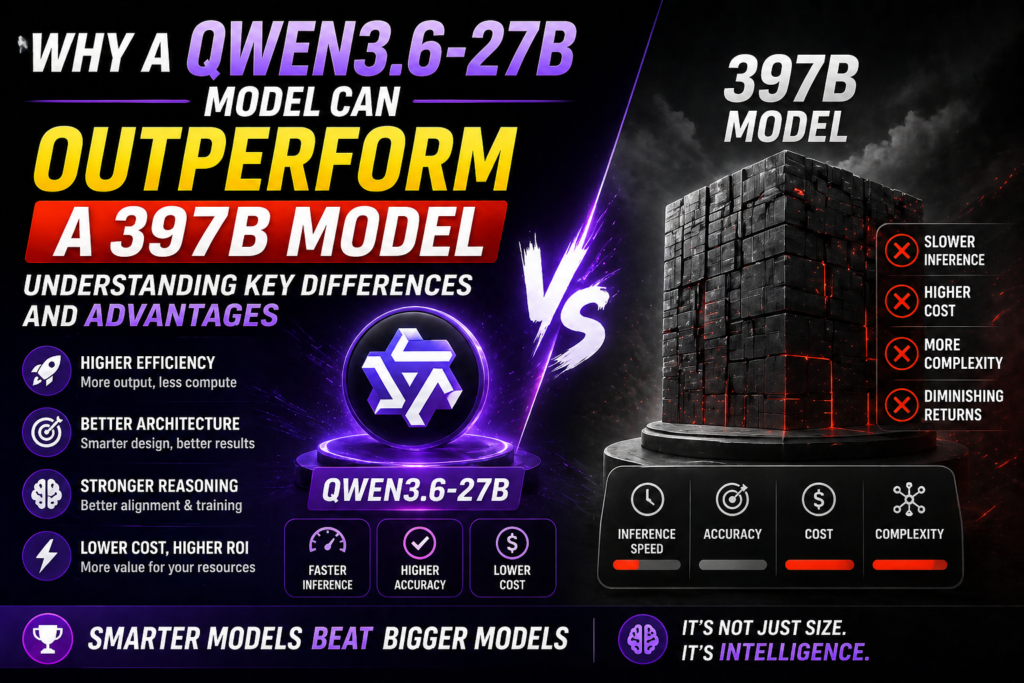

Why a 27B Model Can Outperform a 397B Model: Understanding Key Differences and Advantages

At first glance, it seems almost counterintuitive that a 27 billion parameter model could beat a behemoth with 397 billion parameters. Bigger usually means better, right? Well, not always. The key is understanding what “big” and “better” actually mean in this context.

One simple but important distinction is the model architecture: dense versus mixture-of-experts (MoE). MoE architectures, like that 397B model, can scale total parameter count massively, but only a fraction of the experts are activated for any given token. The extra capacity tends to help with world knowledge and logical coherence over complex, extended contexts, something current benchmarks don’t fully capture. A dense model like the 27B Qwen uses all of its parameters on every token, which often gives it an edge on more focused, agentic tasks like coding, planning, or specific reasoning.

It’s also about what you’re testing. A smaller, dense model might excel on narrow, interactive tasks, while a massive MoE model shines when you need breadth of knowledge or stamina in long, complicated conversations. Think of it like this: You wouldn’t pit a sprinter against a marathon runner on a 100-mile course and expect the sprinter to win, but give them a 100-meter dash, and it’s a different story.

Take the anecdote from advanced users praising Claude 4.6 for its deliberate pace and creativity, and preferring it to a newer, possibly larger successor that felt rushed. It’s a reminder that size isn’t everything; optimization, consistency, and task fit matter just as much. Designers and users alike need to focus on the right metrics rather than just parameter counts.

Introduction: Setting the Context for Model Size and Performance

When it comes to AI models, bigger often feels like better. You’d naturally expect a 397-billion parameter model to outpace a 27-billion one, right? Well, not always. This assumption trips up a lot of folks because sheer size doesn’t guarantee superior performance across the board. Dense models and Mixture of Experts (MoE) architectures play a big role in this puzzle.

MoE models, like that huge 397B beast, spread their capacity among numerous expert sub-networks and route each token to only a few of them. Intuitively, that should let them cover a vast scope of knowledge and handle complex reasoning better. Community reports do suggest that larger MoE models have impressive world knowledge and logical coherence, especially with long, intricate contexts. However, those strengths might not show up on standard benchmarks, which often emphasize more straightforward tasks or niche skills.

In contrast, smaller dense models, such as the 27B Qwen, run every input through all of their parameters, which can translate into steadier “agentic” skills like complex planning or coding. People running high-stakes projects often find these dense models feel more reliable and thoughtful, even if they lack the encyclopedic depth of their larger cousins.

Think of it like a Swiss Army knife versus a toolbox. The 397B MoE is the vast toolbox with many specialized tools that excel in knowledge-heavy jobs, while the 27B dense model is a sharp Swiss Army knife that cuts through specific tasks with surprising dexterity. For certain real-world applications—like detailed project planning or creative writing—a smaller, well-tuned dense model might just feel faster, sharper, and less prone to unexpected stumbles.

The key is understanding what you’re asking the model to do and how the evaluation reflects those needs. After all, a bigger brain doesn’t always mean better decisions in every scenario.

Overview of AI Model Sizes and Common Assumptions

When it comes to AI models, the conventional wisdom tends to be “bigger is always better.” After all, a 397 billion parameter model sounds like a monstrous brain compared to a “mere” 27 billion parameter one, right? But as we’ve seen in practice, size alone rarely tells the full story. The 27B dense model outpacing a 397B MoE (Mixture of Experts) model challenges some of these assumptions, and it’s worth digging into why.

The key lies in what those extra parameters are actually doing. MoE architectures spread their capacity across many specialized “experts,” which can be powerful but also inefficient if the routing doesn’t match the task well. Reddit users highlight how the larger MoE model shines in extensive world knowledge and in complex, long-context reasoning, something current benchmarks might miss. So the 397B model isn’t less capable; it’s more like a specialist with depth in some areas but potentially weaker general versatility.

Meanwhile, the smaller dense 27B excels in focused tasks like agentic coding and quick, coherent responses. It’s kind of like comparing a Swiss Army knife (small, versatile) to a huge toolbox filled with specialized equipment. For many day-to-day tasks, the smaller model feels more nimble and responsive.

A real-world example: think about a big corporation with thousands of employees (experts). While each department excels in very specific areas, sometimes a small, well-rounded team (a dense model) can move faster and solve most problems efficiently. The flip side is when you face a massive, complex challenge—say, planning a multinational product launch—where those specialists truly shine.

So yes, size matters, but context, architecture, and use case often matter more. Benchmarks capturing only surface-level skills don’t tell the whole story, and picking a model should be about fit as much as raw power.

Why Bigger Isn’t Always Better in Machine Learning

When you first hear that a 27-billion-parameter model can outperform a 397-billion-parameter behemoth, it sounds like heresy. Bigger models mean more capacity, right? Not quite. The key lies in understanding what the “bigger” model is actually doing—and what benchmarks we use to measure performance. The 397B model, often structured as a mixture of experts (MoE), shines in broad world knowledge and maintaining logical coherence over lengthy, complex passages. But it might stumble on more focused, agentic tasks like coding or detailed planning, where a dense 27B model can be more agile and precise.

Take, for example, a creative professional juggling complex projects—like Robbie on Reddit, who swore by Claude 4.6 for its slow, thoughtful reasoning and consistency. Upgrading to a newer, possibly larger model didn’t translate to better performance for their specific use case; it actually induced anxiety because the newer model hallucinated or drifted off-topic. This shows that sheer size doesn’t automatically deliver better alignment with human needs.

Another angle is how we evaluate these models. Benchmarks typically favor certain capabilities but barely scratch the surface of nuanced tasks like long-term planning or personalized reasoning. In other words, a smaller, denser model might “feel” smarter or more reliable in everyday usage even if it doesn’t pack as many parameters.

So, bigger is better—sometimes. But often, it’s the right architecture, optimization, and tuning that truly count.

Defining the 27B and 397B Models: Key Specifications

When comparing a 27 billion parameter model to a massive 397 billion parameter one, the specs alone don’t tell the whole story. The 27B model in question, a dense model such as Qwen’s, uses every parameter on every forward pass and avoids the routing complexity and overhead of Mixture of Experts (MoE). Meanwhile, the 397B model is an MoE that activates only a subset of its experts for each input, which in theory should boost performance on diverse or complex inputs.
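To make the “total versus active” distinction concrete, here is a rough sketch of the arithmetic. The function name and the configuration values are assumptions made up for illustration; they do not describe either of the models discussed here, and real MoE models differ in how parameters are split between shared layers and experts.

```python
def moe_param_counts(shared_params, params_per_expert, num_experts, top_k):
    """Return (total, active) parameter counts for a simplified MoE model.

    shared_params: parameters every token uses (attention, embeddings, ...)
    params_per_expert: parameters in one expert, summed over all MoE layers
    num_experts: experts available to the router
    top_k: experts actually selected for each token
    """
    if not 0 < top_k <= num_experts:
        raise ValueError("top_k must be between 1 and num_experts")
    total = shared_params + num_experts * params_per_expert
    active = shared_params + top_k * params_per_expert
    return total, active


# Hypothetical configuration, chosen only to make the arithmetic visible.
total, active = moe_param_counts(
    shared_params=5_000_000_000,
    params_per_expert=1_000_000_000,
    num_experts=64,
    top_k=4,
)
print(f"total: {total / 1e9:.0f}B, active per token: {active / 1e9:.0f}B")
```

A dense model is the special case `num_experts=1, top_k=1`, where total and active counts are the same number. That is one reason a headline parameter count alone says little when comparing a dense model to an MoE.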

But here’s the catch: more experts and bigger scale don’t automatically mean better all-around results. The 397B shines when it comes to world knowledge breadth and maintaining logical coherence over long document stretches—traits key for heavy-duty reasoning or multi-step planning. Yet, in more agentic or interactive coding tasks, which require nimble and precise logic, the 27B dense model often pulls ahead. This might feel surprising since sheer capacity usually offers an edge, but the trade-offs of managing multiple experts (like inconsistent activation or increased latency) can bottleneck real-time reasoning or nuanced task execution.

A real-world analog would be comparing a luxury SUV (the 397B model) built for rough terrains and heavy loads versus a nimble sports car (the 27B model) that excels in precision driving. Each has its domain, and depending on the journey, one might outperform the other despite the size or power difference.

Understanding these nuances is critical because benchmarks don’t always capture the kind of context-aware reasoning or fine-grained planning where smaller dense models can outperform their gargantuan siblings. So rather than size alone, it’s about the architecture, use case, and what kind of reasoning the model is optimized to handle.

Explanation of Parameters and Model Architecture

When people hear “27 billion” versus “397 billion” parameters, it’s tempting to assume the larger model should always dominate. But the reality is way more nuanced. The 27B model is dense, meaning every parameter is active for every token, so its behavior stays consistent from one input to the next. The 397B model, on the other hand, uses a mixture of experts (MoE) architecture, where a router activates only a subset of the parameters (the experts) depending on the input. This conditional activation makes MoE cheaper to scale, but routing that shifts from token to token can make outputs less consistent on tightly focused tasks.
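The conditional activation described above can be sketched with a toy top-k router. This is a minimal illustration under stated assumptions, not how any particular model implements it: the “experts” are scalar functions, the router scores are passed in directly (in a real model a learned gate computes them from the token), and names like `route_top_k` and `moe_layer` are hypothetical.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_scores, k):
    """Pick the k highest-scoring experts and renormalize their weights.

    Returns (expert_index, weight) pairs whose weights sum to 1.
    """
    probs = softmax(router_scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    mass = sum(probs[i] for i in chosen)
    return [(i, probs[i] / mass) for i in chosen]


def moe_layer(x, experts, router_scores, k):
    """MoE: weighted sum over the k selected experts; the rest never run."""
    output = 0.0
    for index, weight in route_top_k(router_scores, k):
        output += weight * experts[index](x)
    return output


def dense_layer(x, blocks):
    """Dense baseline: every block contributes to every input."""
    return sum(block(x) for block in blocks)


# Hypothetical toy setup: 8 scalar "experts", 2 active per token.
experts = [lambda x, scale=i + 1: scale * x for i in range(8)]
random.seed(0)
router_scores = [random.gauss(0.0, 1.0) for _ in experts]
selected = route_top_k(router_scores, k=2)
print("experts used:", [i for i, _ in selected], "of", len(experts))
print("moe output:", moe_layer(1.0, experts, router_scores, k=2))
print("dense output:", dense_layer(1.0, experts))
```

The point of the sketch is only the control flow: the dense path touches all eight blocks for every input, while the MoE path touches two, and which two depends on the router scores.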

It’s kind of like comparing a tight-knit all-star team (dense 27B) to a giant soccer league with many specialists who kick in only during specific plays (MoE 397B). The all-stars bring cohesion and steady performance, especially for tasks requiring clear, focused analysis. But the league, with its depth and diversity, shines in broader knowledge coverage and tackling complex, multi-step reasoning—something benchmarks may not fully capture yet.

A practical insight here is that task type heavily influences which model wins. For example, a 27B dense model might excel at agentic coding (quick, precise problem-solving), while an MoE giant tends to be stronger at holding long, logical threads across pages of text, like planning a detailed research project. So it’s not just about size but about architecture and how the model is tuned for specific cognitive tasks. This explains why, despite its smaller size, the 27B model sometimes tops benchmarks where agentic coding or dense reasoning is key.

Typical Use Cases for Mid-Sized vs. Very Large Models

Let’s face it, bigger isn’t always better—at least not across every AI task. While a gargantuan 397B model often boasts a deep reservoir of world knowledge and dazzling long-form coherence, it’s not necessarily the go-to for every scenario. Community insights suggest these large models truly shine when you need complex reasoning or strategic planning stretched across long contexts—something benchmarks don’t always capture well.

On the flip side, mid-sized models like the 27B offering often punch way above their weight in focused, agentic tasks. Think coding assistants or tightly scoped workflows where agility, speed, and responsiveness matter more than encyclopedic knowledge. A smaller, dense model zeros in on core objectives without the occasional side steps or hallucinations a bigger, more sprawling model might introduce.

One user’s candid experience highlights this: relying on a mid-sized model felt like working with a thoughtful collaborator who respects subtlety and structure, whereas the bigger sibling would sometimes “run off script,” tossing in inaccuracies or erratic choices. The takeaway? If you’re building something that requires high precision and tuned interactions—like managing extensive, real-world projects or creative pipelines—the mid-sized model’s laser focus can be a blessing.

Real-world analogy: imagine a seasoned craftsman (27B) versus a sprawling factory (397B). The factory can handle massive production with diverse capabilities, but the craftsman’s hands are nimble and finely tuned for that intricate, delicate work that mass production struggles to replicate perfectly.

Performance Metrics Beyond Scale: What Really Matters

It’s tempting to think that a bigger model automatically means better performance, but the reality is way more nuanced. The 397B model does pack a punch when it comes to sheer knowledge and long-context coherence, especially on complex tasks that demand deep reasoning. Yet, some benchmarks—those quick tests we often worship—don’t catch the subtleties where large models shine. Meanwhile, smaller dense models like the 27B can sometimes outpace their massive counterparts simply because the evaluation isn’t measuring what truly matters in real-world use.

One crucial factor is *task specificity*. A user on Reddit shared a heartfelt experience with Claude 4.6, praising the thoughtful, slower cadence that let them organize decades of intricate work without hallucinations. The subsequent upgrade sped up the model but reduced that careful control, leading to frustration and errors. It’s a vivid reminder that bigger or faster doesn’t always translate to “better” in practice.

So what’s really going on beyond raw size? Dense models excel at steady, reliable outputs for well-defined applications. MoE (Mixture of Experts) architectures, while larger, sometimes struggle with agility when the task requires nuanced iteration or creative reasoning.

In short, scale matters, but so does how you measure success. Forget just counting parameters; look closely at *what you want the AI to do*. That’s where the smaller 27B model can legitimately outperform a 397B behemoth—and why simply throwing more experts at a problem isn’t the surefire solution many assume.
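One way to act on “look at what you want the AI to do” is to weight per-task scores by your own workload instead of reading a single leaderboard number. The sketch below assumes you already have per-task scores from your own evaluations; the model names, task names, and every number in it are placeholders invented for illustration, not measurements of any real model.

```python
def weighted_score(task_scores, task_weights):
    """Average per-task scores using weights that reflect your own workload."""
    total_weight = sum(task_weights.values())
    if total_weight <= 0:
        raise ValueError("task weights must sum to a positive number")
    weighted = sum(task_scores[task] * w for task, w in task_weights.items())
    return weighted / total_weight


def pick_model(scores_by_model, task_weights):
    """Return the model name with the highest workload-weighted score."""
    return max(
        scores_by_model,
        key=lambda name: weighted_score(scores_by_model[name], task_weights),
    )


# Placeholder scores, invented for illustration only; not measurements.
scores_by_model = {
    "dense-27b": {"agentic_coding": 0.70, "long_context": 0.50},
    "moe-397b": {"agentic_coding": 0.60, "long_context": 0.80},
}

coding_heavy = {"agentic_coding": 0.9, "long_context": 0.1}
research_heavy = {"agentic_coding": 0.2, "long_context": 0.8}

print("coding-heavy workload ->", pick_model(scores_by_model, coding_heavy))
print("research-heavy workload ->", pick_model(scores_by_model, research_heavy))
```

With the same two score tables, the preferred model flips depending on the weights, which is the whole argument of this section in miniature.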

Accuracy, Latency, and Efficiency Considerations

When comparing a 27-billion parameter (27B) dense model to a 397-billion parameter (397B) mixture-of-experts (MoE) model, it’s tempting to assume simply “bigger is better.” But that doesn’t always hold, especially if you look beyond raw parameter counts. The large MoE models excel at packing in world knowledge and handling complex, long-context tasks with logical coherence—something that smaller dense models might struggle with. Yet, the benchmarks we rely on often don’t capture these nuanced strengths.

Take user experiences on Reddit: people praised Claude 4.6’s thoughtful, slow-paced reasoning, which felt more precise and less prone to hallucinations than its successors or larger models. This shows that fidelity to the task (aka accuracy) isn’t just a numbers game. Larger models can also be harder to serve: an MoE only computes with its active experts for each token, but all 397B parameters still have to sit in memory, and that extra footprint and routing overhead can hurt responsiveness in real-time applications.
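A back-of-the-envelope estimate shows why serving cost and parameter count are not the same thing. The sketch assumes 2 bytes per weight (16-bit precision) and the common rule of thumb of roughly 2 FLOPs per active parameter per generated token; it ignores the KV cache, activations, and routing overhead. The active-parameter figure for the 397B model is a placeholder, since the article does not state it.

```python
def inference_footprint(total_params, active_params, bytes_per_param=2):
    """Rough weight memory (GB) and forward-pass compute per token (GFLOPs).

    Assumes ~2 FLOPs per active parameter per token; ignores the KV cache,
    activations, and routing overhead.
    """
    weight_memory_gb = total_params * bytes_per_param / 1e9
    gflops_per_token = 2 * active_params / 1e9
    return weight_memory_gb, gflops_per_token


# Dense model: every parameter is both stored and used.
dense_mem, dense_flops = inference_footprint(27e9, 27e9)

# Placeholder value: the active parameter count of the 397B MoE is an
# assumption for illustration, not a figure from the article.
ASSUMED_ACTIVE_397B = 20e9
moe_mem, moe_flops = inference_footprint(397e9, ASSUMED_ACTIVE_397B)

print(f"dense 27B: {dense_mem:.0f} GB weights, {dense_flops:.0f} GFLOPs/token")
print(f"MoE 397B:  {moe_mem:.0f} GB weights, {moe_flops:.0f} GFLOPs/token")
```

Under these assumptions the MoE needs far more memory for its weights even when its per-token compute is in the same range as the dense model, so which one feels faster depends heavily on the hardware it runs on.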

It’s also about where you’re deploying the model. For instance, long-horizon planning tasks lean heavily on larger models’ broader knowledge base and long-term reasoning. But for coding or content generation that benefits from snappy, coherent outputs, the 27B dense model may outperform a bulky 397B MoE in both speed and relevance.

A real-world analogy: it’s like having a massive encyclopedia versus a well-curated book. The encyclopedia has more info but takes forever to look up what you need, while the book gets you the essentials faster and often more accurately for the task at hand.

So the takeaway? Efficiency and the type of task dictate which model “wins”—and benchmarks don’t always tell the full story.

Real-World Benchmarks Comparing 27B and 397B Models

It might sound counterintuitive at first—how could a 27-billion parameter dense model hold its own, or even outperform, a whopping 397-billion parameter model that uses Mixture of Experts (MoE)? The key lies in what the benchmarks actually measure and the use cases targeted.

Most standard benchmarks today tend to favor dense architectures for tasks requiring quick, sharp reasoning or succinct context understanding. A dense 27B model can shine in these scenarios because all its parameters are actively involved at every step, giving it a coherent and consistent response style. Meanwhile, the 397B MoE beast, despite its massive scale, tends to do best at capturing broader world knowledge and sustaining logical coherence, especially over long contexts or multifaceted planning, areas current benchmarks don’t fully capture yet.

One user perspective from the Reddit community echoes this nuance poignantly. A devoted Claude 4.6 user found it invaluable for complex projects needing thoughtful, step-by-step reasoning, something that a newer, larger model variant couldn’t replicate without hallucinations or rushed outputs. This illustrates how sheer size doesn’t always translate to practical reliability or nuance—in many real-world workflows, coherence and understanding outpace raw parameter counts.

So, while a 27B model might appear “better” in quick-fire tests, the 397B’s value is often in arenas benchmarks miss: deep knowledge, planning, and extended reasoning. This disparity reminds us to look beyond numbers and understand the right tool for the right task.

In conclusion, the cases where a 27-billion parameter model beats a 397-billion parameter model underscore the importance of architecture efficiency, training optimization, and purposeful design rather than sheer size. Larger models often face diminishing returns from increased complexity, greater computational demands, and harder training, which can limit their practical applicability. A well-tuned 27B model, in contrast, can use its parameters more fully and lean on task-specific fine-tuning to deliver comparable or better results more efficiently. Organizations and researchers should weigh model architecture and training methodology alongside parameter count when optimizing for performance, cost-efficiency, and scalability. Understanding these nuances helps the AI community build more effective and accessible language models.